Related posts

‘Rottlace’ masks for Björk by the Mediated Matter Group / MIT Media Lab

Jan 18, 2017

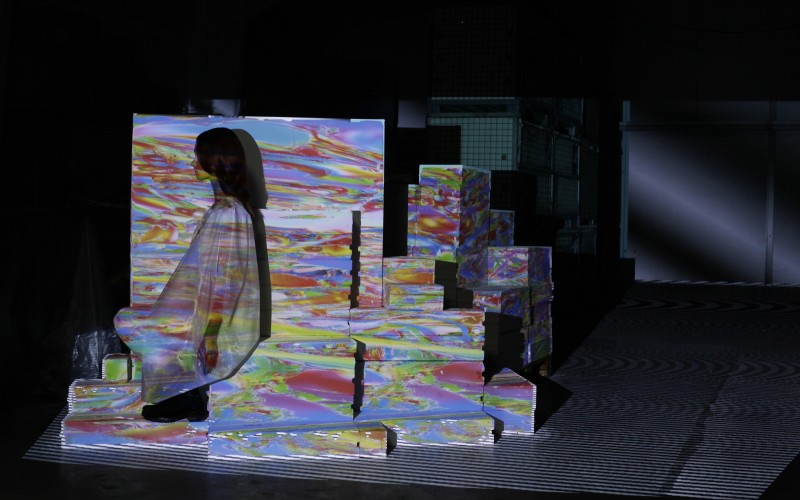

Versum feat. Lea Fabrikant 2017 (excerpt)

Jul 27, 2017

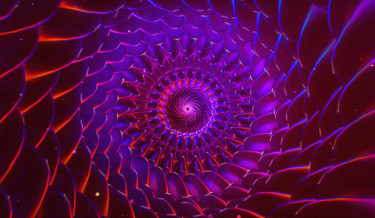

Ash Thorp remixes Adobe in this psychedelic journey through the creative process

Nov 03, 2015
