Related posts

Blood Pours from Facial-Mapped Projections of Venetian Noh Masks

Apr 21, 2017

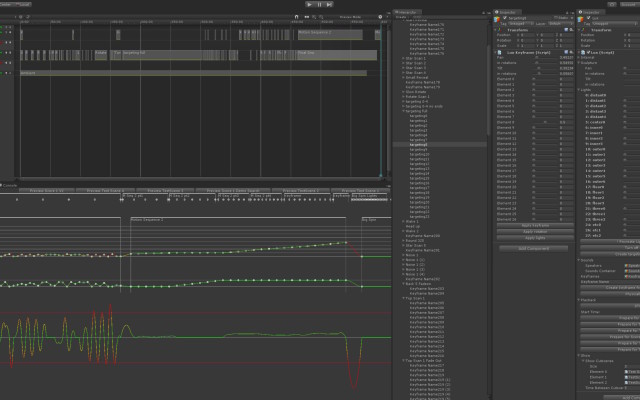

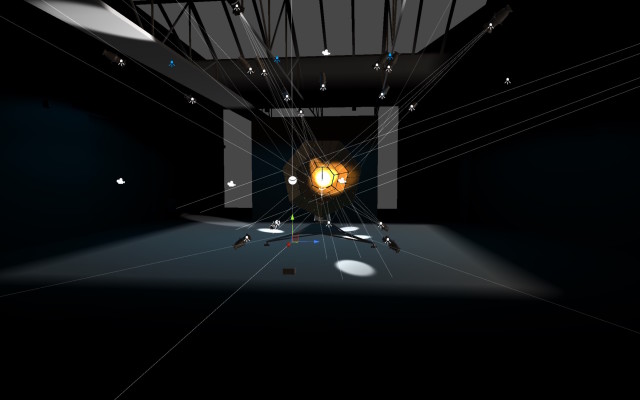

RECONDITE – SKULL – 3D PROJECTION

Jan 28, 2015

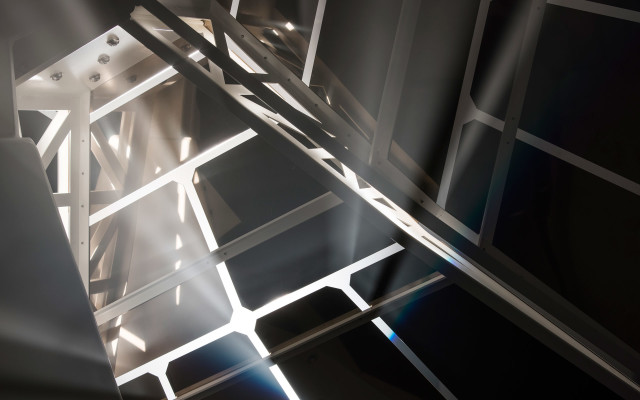

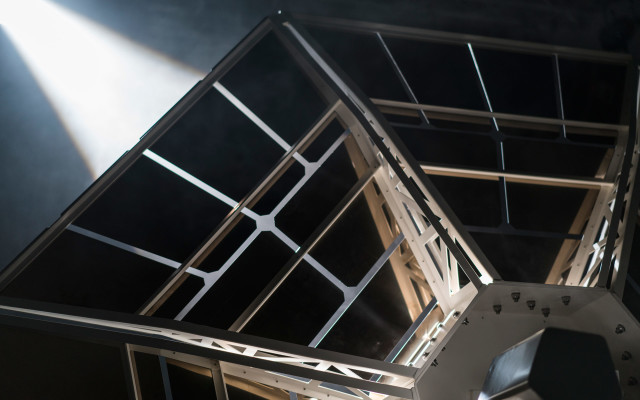

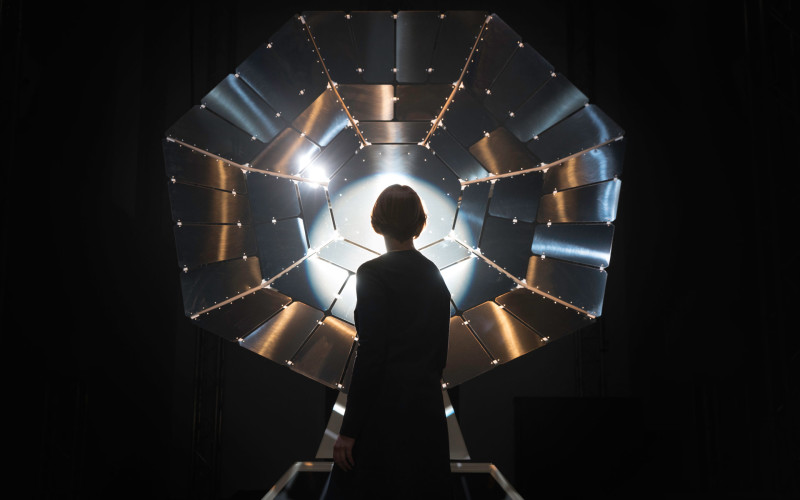

Ouchhh: AVA V2 Installation in Paris

Jan 18, 2017
