Related post

AURA, A LUMINOUS EXPERIENCE IN THE HEART OF MONTREAL’S NOTRE-DAME BASILICA

Mar 07, 2017

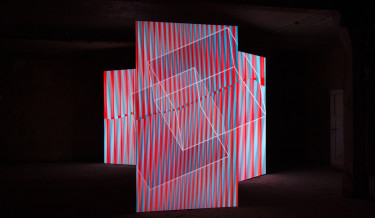

Perspection – Anamorphic image and sound synthesis across multiple screens

Nov 13, 2015

Become a Dust Particle in This VR Performance

Apr 21, 2017